Top 10 AI Development Trends That Will Transform Businesses in 2026

Artificial intelligence is no longer something companies experiment with on the side. In 2026, AI sits at the center of how modern businesses operate, compete, and grow. From automating complex workflows to shaping customer experiences and decision-making, AI has crossed the line from emerging technology to business infrastructure.

What makes this moment different is scale. AI is no longer limited to innovation teams or isolated pilots. It is being embedded into products, operations, security, finance, and strategy. Companies that understand where AI is heading this year will not just keep up. They will set the pace.

Market size and growth projections

The global AI market was valued at around $371.71 billion in 2025 and is projected to exceed $2.4 trillion by 2032, a compound annual growth rate (CAGR) of roughly 30% or more from 2025 onward. Industry analysts also estimate that the AI market may expand toward $1.8 trillion by 2030, reflecting strong, sustained growth across software and services. A related forecast has the AI-as-a-service segment alone growing at a CAGR of about 36% through 2030, indicating strong enterprise demand for scalable AI capabilities. Together, these figures place AI among the fastest-growing technology categories.

Enterprise adoption and usage trends

A recent analysis indicates that 67% of organizations are increasing their investments in generative AI, with large language model tools in widespread use across business functions. Other data suggests that 52% of large organizations have dedicated AI adoption teams, and many are actively moving beyond pilots into production use.

What these stats mean

These sources collectively show that:
- AI market valuation reached the hundreds of billions of dollars in 2025, with multi-trillion-dollar forecasts ahead.
- Growth rates (CAGR) for AI and related segments remain in the 20–35%+ range.
- Enterprise adoption is widely established, not experimental, with many companies transitioning from pilots to production systems.

1) Autonomous and Agentic AI

The era of AI as a passive assistant is ending. In 2026, businesses are adopting agentic AI: models that plan, make decisions, trigger actions, and coordinate across systems with minimal human supervision. These are not simple scripts or rule-based bots. Agentic systems can:
- Understand multi-step workflows
- Operate across apps and databases
- Adapt when outcomes differ from expectations

Real-world advantage: Companies use agents to automate cross-system processes such as contract reviews, supply chain adjustments, and end-to-end customer lifecycle tasks.

Example: An AI agent routes a sales lead through qualification, drafts personalized outreach, schedules demos, and updates pipeline forecasts automatically, freeing the sales team to close deals instead of coordinating them.

2) Vertical and Industry-Specific AI

Businesses are moving beyond generic AI models toward systems built specifically for their industry, data, and regulatory environment. Vertical AI solutions are trained on domain-specific datasets and workflows, which lets them understand specialized terminology, compliance requirements, and operational patterns. These systems can:
- Deliver higher accuracy in complex domains
- Reduce regulatory and compliance risk
- Generate insights that generic models often miss

Real-world advantage: Organizations gain AI systems that behave like subject-matter experts rather than general assistants.

Example: A healthcare provider deploys an AI model trained on radiology images and clinical records to support diagnosis while maintaining regulatory compliance.

3) AI Operationalization and LLMOps

As AI adoption grows, managing models in production has become just as important as building them. LLMOps focuses on monitoring, maintaining, and improving large language models throughout their lifecycle.
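Drift detection is one of the core LLMOps jobs. A common way to implement it for a numeric signal, such as a model's confidence scores, is the population stability index (PSI), which compares the binned distribution of a live window against a training-time baseline. The sketch below is a minimal illustration under stated assumptions: the bin edges, the sample scores, and the 0.2 alert threshold are invented for the example (cut-offs between roughly 0.1 and 0.25 are often quoted), not settings taken from any particular platform.

```python
import math

def psi(expected, actual, edges, eps=1e-6):
    """Population stability index between a baseline sample and a live sample.

    `edges` are ascending bin boundaries (an assumption of this sketch);
    values below the first edge or above the last fall into the end bins.
    """
    def proportions(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            idx = sum(v >= e for e in edges)  # how many edges v has passed
            counts[idx] += 1
        total = len(values)
        # Clamp empty bins to eps so the log term below stays finite.
        return [max(c / total, eps) for c in counts]

    base, live = proportions(expected), proportions(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(base, live))


# Hypothetical confidence scores: a training-time baseline and two live windows.
baseline = [0.1, 0.3, 0.5, 0.7, 0.9] * 20
stable_week = [0.1, 0.3, 0.5, 0.7, 0.9] * 20
drifted_week = [0.1, 0.2, 0.25, 0.3, 0.35] * 20
EDGES = [0.2, 0.4, 0.6, 0.8]
DRIFT_THRESHOLD = 0.2  # assumed alerting cut-off

for name, window in [("stable", stable_week), ("drifted", drifted_week)]:
    score = psi(baseline, window, EDGES)
    status = "ALERT: investigate / consider retraining" if score > DRIFT_THRESHOLD else "ok"
    print(f"{name}: PSI={score:.3f} -> {status}")
```

In a real pipeline the baseline would typically come from training or validation data and the live window from recent production logs; an alert would usually trigger investigation or retraining rather than an automatic model swap.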
Modern AI operations platforms can:
- Track model performance and accuracy
- Detect data and behavior drift
- Automate retraining and version control

Real-world advantage: Businesses avoid silent failures and keep AI systems reliable as data and user behavior evolve.

Example: A customer support chatbot retrains monthly on new ticket data and alerts engineers if response quality declines.

4) Ethical AI, Governance, and Compliance

AI systems increasingly influence financial decisions, hiring, medical diagnoses, and legal processes, which makes governance unavoidable. Organizations are implementing structured AI governance frameworks to manage risk, transparency, and accountability. These frameworks help companies:
- Document training data sources
- Explain model decisions
- Control bias and unfair outcomes

Real-world advantage: Businesses protect themselves from legal exposure while building customer and regulator trust.

Example: A bank maintains a full audit trail for every AI-driven credit approval or rejection.

5) Multimodal AI Experiences

AI is no longer limited to text input and output. In 2026, leading systems understand and combine text, images, audio, and structured data, which lets users interact with AI in more natural and efficient ways. Multimodal AI systems can:
- Interpret visual information
- Process voice commands
- Combine multiple data types for deeper context

Real-world advantage: Teams solve real-world problems faster through richer, more intuitive interfaces.

Example: A field technician uploads a photo of damaged equipment and receives spoken repair instructions generated by the AI system.

6) AI-Driven Software Development

AI has become a core part of the modern software development lifecycle. Developers use AI tools to accelerate coding, testing, documentation, and debugging. These systems can:
- Generate functional code blocks
- Detect security vulnerabilities
- Suggest system architecture improvements

Real-world advantage: Engineering teams deliver products faster with fewer defects.

Example: A SaaS company cuts feature development time by 40% by using AI-generated scaffolding and automated test creation.

7) Responsible AI and Safety Engineering

As AI systems take on critical responsibilities, companies are embedding safety checks directly into development workflows. Responsible AI practices focus on preventing harmful behavior before it reaches users. These practices include:
- Bias detection testing
- Hallucination monitoring
- Human review for sensitive decisions

Real-world advantage: Organizations prevent large-scale mistakes and preserve public trust.

Example: An AI-powered recruitment system flags borderline candidate rankings for human verification before final decisions are made.

8) AI-Powered Cybersecurity

Cybersecurity is becoming an AI-versus-AI battlefield. Businesses are deploying machine learning models to detect attacks faster than traditional security tools can. These systems can:
- Identify unusual network behavior
- Predict breach patterns
- Automatically isolate threats

Real-world advantage: Security teams can respond to incidents in seconds instead of hours.

Example: An AI system blocks a coordinated phishing attempt after detecting abnormal email patterns across departments.

9) Cost-Efficient and Sustainable AI

AI systems consume significant

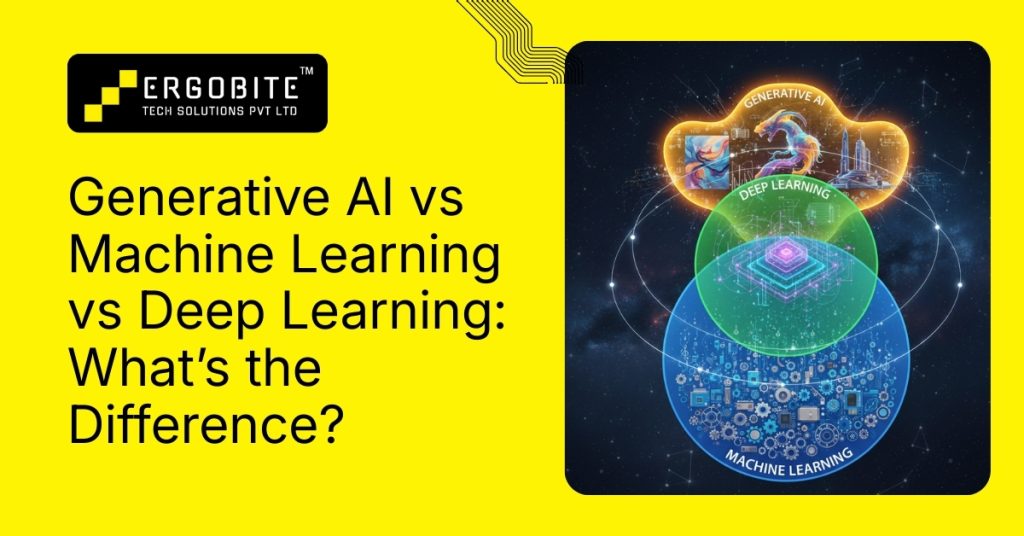

Generative AI vs Machine Learning vs Deep Learning: What’s the Difference?

Artificial intelligence has become one of the most overused terms in modern technology. It shows up in marketing decks, product descriptions, investor pitches, and news headlines, often with little clarity about what it actually refers to. Part of the confusion comes from the way three related but very different technologies get grouped together: machine learning, deep learning, and generative AI. They are connected. They build on one another. But they are not interchangeable.

Understanding how they differ is not just useful for engineers. It affects how products are designed, how infrastructure is planned, how budgets are set, and what kind of results a system can realistically deliver. This guide breaks down each layer, explaining why it exists, what problems it solves, where it fails, and how all three fit into modern AI systems.

The big picture: AI as a stack, not a single technology

Artificial intelligence is best understood as a goal, not a specific technique. The goal is simple to describe but difficult to achieve: build systems that can perform tasks normally associated with human intelligence. Over time, different technical approaches have been developed to move closer to that goal. The most important of these today form a clear hierarchy:
- Artificial Intelligence: the overall ambition
- Machine Learning: learning from data
- Deep Learning: neural networks for complex data
- Generative AI: creating new data and content

You can think of them as layers:

AI → Machine Learning → Deep Learning → Generative AI

Each layer is a narrower subset of the one before it. Generative AI would not exist without deep learning. Deep learning is a specific form of machine learning. And machine learning is the dominant way modern AI systems are built. Seeing this structure upfront makes everything else easier to understand.
Machine Learning (ML): The foundation

Machine learning is about teaching computers to learn from examples so they can make their own decisions or predictions. A simple way to understand this is to think about how children learn everyday concepts. If you show a child many pictures of apples and bananas and repeatedly say, “This is an apple” and “This is a banana,” the child eventually learns to tell them apart without being given formal rules.

Machine learning works similarly. We give computers large amounts of example data, and they learn patterns that help them make predictions about new data. This ability to learn from experience instead of fixed instructions is what makes machine learning the foundation of modern AI systems.

How does machine learning work?

Machine learning usually follows a clear process with a few key stages:
- Data collection: Gather many examples, such as transaction records, customer activity logs, sensor readings, or product data.
- Data preparation: Clean the data by removing errors, fixing missing values, and adding labels where needed.
- Selecting an algorithm (model): Choose a model that fits the problem. Some models classify data, some predict numbers, and others find hidden patterns.
- Training: Feed the prepared data into the model so it can learn by adjusting its internal parameters to reduce mistakes.
- Evaluation: Test the model on new data it has not seen before to check how accurate it is.
- Deployment: Use the model in real systems to make predictions on live data.

Example: predicting delivery time for online orders

Imagine training a system on 50,000 past deliveries, each with details such as:
- distance from the warehouse
- type of product
- time of day
- traffic level
- actual delivery time

From this data, the model learns patterns such as:
- longer distances increase delivery time
- rush-hour traffic causes delays
- some product categories need extra handling time

When a new order comes in, the system estimates how long delivery will take based on what it learned.
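The delivery-time example can be sketched in plain Python. This is a minimal illustration under stated assumptions, not a production model: it generates 50,000 synthetic deliveries from an invented formula (the coefficients, the noise level, and the hypothetical `rush_hour` and `fragile` flags standing in for time of day, traffic, and product type are all made up), then fits an ordinary least-squares linear model, one of the simplest supervised-learning algorithms, and uses the learned weights to estimate a new order.

```python
import random

def make_deliveries(n, seed=0):
    """Generate synthetic delivery records (hypothetical data for illustration)."""
    rng = random.Random(seed)
    rows, minutes = [], []
    for _ in range(n):
        distance_km = rng.uniform(1, 30)
        rush_hour = rng.choice([0, 1])   # stands in for time of day / traffic level
        fragile = rng.choice([0, 1])     # stands in for product type needing extra handling
        # Hidden pattern the model has to discover (invented coefficients + noise).
        actual = 20 + 3.0 * distance_km + 15 * rush_hour + 10 * fragile + rng.gauss(0, 2)
        rows.append([1.0, distance_km, rush_hour, fragile])  # 1.0 is the intercept term
        minutes.append(actual)
    return rows, minutes

def fit_least_squares(X, y):
    """Training: solve the normal equations (X^T X) w = X^T y by Gaussian elimination."""
    k = len(X[0])
    A = [[sum(r[i] * r[j] for r in X) for j in range(k)] for i in range(k)]
    b = [sum(r[i] * t for r, t in zip(X, y)) for i in range(k)]
    for col in range(k):
        # Partial pivoting for numerical stability.
        p = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[p] = A[p], A[col]
        b[col], b[p] = b[p], b[col]
        for r in range(col + 1, k):
            f = A[r][col] / A[col][col]
            for c in range(col, k):
                A[r][c] -= f * A[col][c]
            b[r] -= f * b[col]
    # Back substitution on the upper-triangular system.
    w = [0.0] * k
    for i in reversed(range(k)):
        w[i] = (b[i] - sum(A[i][j] * w[j] for j in range(i + 1, k))) / A[i][i]
    return w

def predict(w, distance_km, rush_hour, fragile):
    """Estimate delivery minutes for a new order with the learned weights."""
    x = [1.0, distance_km, rush_hour, fragile]
    return sum(wi * xi for wi, xi in zip(w, x))

X, y = make_deliveries(50_000)
weights = fit_least_squares(X, y)
print("learned weights (intercept, per km, rush hour, fragile):",
      [round(v, 2) for v in weights])
print("new order, 12 km at rush hour:", round(predict(weights, 12.0, 1, 0), 1), "minutes")
```

A library model (for example scikit-learn's `LinearRegression`) could replace `fit_least_squares`; the collect, prepare, train, and predict workflow stays the same. A real pipeline would also hold out unseen data for the evaluation step.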
No rules were written manually. The model learned them from data.

Types of machine learning
- Supervised learning: The system is trained on labeled data where the correct answers are known. For example, customer transactions are labeled as “fraud” or “legitimate.”
- Unsupervised learning: The data has no labels. The system finds patterns by itself, such as grouping customers with similar buying behavior.
- Reinforcement learning: The system learns by trial and error using rewards and penalties, such as warehouse robots learning to choose the fastest paths.

Real-world examples
- Fraud detection in digital payments
- Music and product recommendations on streaming and e-commerce platforms
- Inventory demand forecasting for retail chains

Machine learning is powerful, but it does not understand meaning or context. It relies heavily on historical data, and it struggles with raw text, images, and sound unless humans first engineer useful features from them. That limitation is what led to deep learning.

Deep Learning: adding complexity and perception

Deep learning is a type of machine learning that helps computers work with complex data such as images, text, audio, and video. It uses artificial neural networks loosely inspired by how the human brain processes information. These networks consist of many connected layers, with each layer learning different features of the data.

How does deep learning work?

When a computer analyzes a satellite image:
- The first layer detects edges and color patterns
- The next layers identify roads, rivers, and buildings
- The final layers recognize locations such as cities or industrial zones

At first, the system makes many mistakes. With repeated feedback, it gradually becomes more accurate.
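The layered, learn-from-feedback process just described can be illustrated at toy scale. The sketch below is a stand-in, not an image model: a two-layer network with sigmoid units, trained by backpropagation on the XOR problem (a classic task that a single linear layer cannot solve). The hidden-layer size, learning rate, and epoch count are arbitrary assumptions. It only demonstrates the mechanism: a forward pass through stacked layers, an error signal, and many small weight adjustments that reduce that error.

```python
import math
import random

def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

# XOR truth table: output is 1 only when exactly one input is 1.
DATA = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 0)]

def train_xor(hidden=4, epochs=5000, lr=0.5, seed=1):
    """Train a 2-input, `hidden`-unit, 1-output network; return mean loss per epoch."""
    rng = random.Random(seed)
    w1 = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(hidden)]
    b1 = [0.0] * hidden
    w2 = [rng.uniform(-1, 1) for _ in range(hidden)]
    b2 = 0.0
    losses = []
    for _ in range(epochs):
        total = 0.0
        for x, target in DATA:
            # Forward pass: the hidden layer builds intermediate features,
            # the output layer combines them into a prediction.
            h = [sigmoid(w1[j][0] * x[0] + w1[j][1] * x[1] + b1[j]) for j in range(hidden)]
            out = sigmoid(sum(w2[j] * h[j] for j in range(hidden)) + b2)
            err = out - target
            total += err * err
            # Backward pass: gradient of the squared error, layer by layer.
            d_out = 2.0 * err * out * (1.0 - out)
            for j in range(hidden):
                d_h = d_out * w2[j] * h[j] * (1.0 - h[j])  # uses w2[j] before its update
                w2[j] -= lr * d_out * h[j]
                w1[j][0] -= lr * d_h * x[0]
                w1[j][1] -= lr * d_h * x[1]
                b1[j] -= lr * d_h
            b2 -= lr * d_out
        losses.append(total / len(DATA))
    return losses

losses = train_xor()
print(f"mean squared error, first epoch: {losses[0]:.4f}, last epoch: {losses[-1]:.4f}")
```

Real deep learning frameworks compute these gradients automatically and run them on GPUs across millions of parameters, but the forward-pass, error, and update loop is the same idea.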
Real-world examples of deep learning
- Voice assistants convert speech into text and understand commands
- Medical imaging systems detect tumors in scans
- Facial recognition unlocks phones

Deep learning allowed AI systems to move beyond numbers and tables and start understanding the real world visually and linguistically. However, it still focuses mainly on recognition and prediction. It does not naturally create new content. That is where generative AI comes in.

Generative AI: creating something new

Generative AI is a subset of deep learning that focuses on producing new content rather than only analyzing existing data. Instead of just recognizing patterns, these systems learn how data is structured and then use that knowledge to create new material such as text, images, music, or software code.
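The learn-the-structure-then-generate idea can be shown without any deep learning at all. The sketch below deliberately swaps in a character-level bigram (Markov chain) model, which is far simpler than the neural networks behind modern generative AI: it counts which character tends to follow which in a tiny made-up corpus, then samples new text from those counts. The corpus, seed, and output length are arbitrary assumptions.

```python
import random
from collections import defaultdict

def train_bigram(corpus):
    """Learn the data's structure: count which character follows which."""
    counts = defaultdict(lambda: defaultdict(int))
    for a, b in zip(corpus, corpus[1:]):
        counts[a][b] += 1
    return counts

def generate(counts, start, length, seed=0):
    """Create new text by repeatedly sampling a likely next character."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length - 1):
        followers = counts.get(out[-1])
        if not followers:  # dead end: this character was never followed by anything
            break
        chars = list(followers)
        weights = [followers[c] for c in chars]
        out.append(rng.choices(chars, weights=weights)[0])
    return "".join(out)

corpus = "the cat sat on the mat. the dog sat on the log. "
model = train_bigram(corpus)
print(generate(model, "t", 40))
```

Because this model only knows adjacent character pairs, its output is locally plausible but globally nonsensical. Learning much longer-range structure is what deep generative models add.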

Top 10 Best Practices for Building Reliable AI Systems

AI systems deployed in real environments don’t fail like traditional software. They drift, hallucinate, respond unpredictably to data changes, or silently degrade over time. The lesson is straightforward but often overlooked: reliability is an engineering discipline, not just a matter of accuracy. Without intentional design, testing, observability, and governance, even advanced models can become liabilities rather than assets.

Here’s a structured approach, grounded in real enterprise practice, that addresses what engineering teams, leaders, and decision-makers need to build reliable AI solutions that scale.

1. Define Clear Goals and Success Metrics

- Specify success criteria as engineering requirements: the acceptable accuracy range on live data, maximum latency (e.g., p95 response time), and uptime targets.
- Plan failure modes: Decide how the system should behave under partial failure (e.g., degraded output, cached answers) or when confidence is low.
- Align with stakeholders: Connect these metrics to business outcomes such as customer satisfaction and cost savings. As one guide notes, linking AI metrics (error rates, inference time) to conversion rates, user feedback, or NPS helps prioritize the issues that affect real outcomes.

2. Invest in Strong Data Foundations

High-quality, representative data is the bedrock of reliability. “Reliable AI begins with reliable data”: poor or biased data guarantees failure regardless of model sophistication. Build robust data pipelines with these practices:
- Data validation and cleaning: Automate checks for missing values, schema violations, outliers, and duplicates before data reaches the model. Use versioning and lineage tools so you know exactly what data a model saw during training and at inference time.
- Diversity and representativeness: Ensure training data covers the full range of real-world conditions, including edge cases and rare scenarios, so the model generalizes. Without this breadth, models may “work well for common cases while failing on less frequent but important situations.”
- Continuous updates: Refresh datasets regularly. Many domains shift (new slang, seasonality, market changes), so stale training data leads to “drift from reality.” Monitor incoming data freshness and retrain periodically to keep the model aligned with the current environment.

3. Architect for Modularity and Resilience

Design your AI system as a collection of well-defined, interchangeable components rather than a monolith. Separate modules for data preprocessing, model inference, reasoning/agents, and tool integration make the system easier to test and evolve. Key practices include:
- Clear interfaces: Define strict inputs and outputs for each component (e.g., prompt-formatting modules, processing pipelines). This “contract” ensures that a change in one component doesn’t silently break others.
- Redundancy and fallback: Build backups. For critical tasks, run a simple rule-based or alternate model in parallel. If the main model falters or returns low-confidence output, fall back to a safer heuristic or escalate to a human. For example, cross-check outputs against hard-coded rules or a secondary validation model.
- Graceful degradation: Plan for failures. Implement timeouts and circuit breakers on external API calls. If a tool call fails repeatedly, switch to an alternative or pause that part of the workflow. By defaulting to safe behaviors (e.g., “I’m sorry, I cannot answer that”), the system avoids catastrophic crashes and keeps the user experience under control.

4. Implement Comprehensive Observability

You can’t fix what you can’t see. Instrument every layer of the system for real-time monitoring and logging, and track not just high-level accuracy but also distributions and anomalies:
- Model signals: Record input and output distributions, confidence scores, and error codes for each inference. Watch for shifts (e.g., sudden spikes in confidence or frequent “low confidence” flags) that indicate drift or unusual inputs.
- Data health: Continuously monitor data quality metrics such as schema drift, missing fields, and skew between training and live data. “Bad data means bad predictions,” as experts note; schema changes or noisy input should trigger alerts.
- Infrastructure metrics: Log system stats (CPU/GPU usage, latency percentiles, queue lengths) and API performance. Capture traces across microservices so you can follow a user request from the frontend through the AI pipeline.
- Business metrics: Tie model performance to KPIs. For instance, track how prediction quality affects task completion rates, user feedback, or financial metrics. This way, you “prioritize issues that threaten customer experience or business commitments.”

In practice, unified observability (metrics, logs, traces) mapped to business SLOs can dramatically shorten incident response. When monitoring alerts on a missed target, dashboards should let engineers “see GPU utilization, data pipeline status, API error rates, and recent deploy changes” in one view.

5. Robust Error Handling and Fallbacks

AI components must expect partial failures. Rather than crashing, the system should degrade predictably. Best practices include:
- Explicit timeouts and circuit breakers: If an external API or model call hangs or errors, fail fast and retry later. After repeated failures, open the circuit and switch to a backup process or human review channel.
- Predefined alternative paths: For any single point of failure, have a secondary path. For example, if an expensive LLM call fails, fall back to a smaller model or a cached answer. If no path can handle the request, return a safe default message or escalate.
- Human escalation points: Define confidence thresholds below which outputs go to a human for review. For high-stakes outputs (medical advice, financial decisions), integrate a human-in-the-loop step into your workflow. This prevents a single unpredictable AI error from propagating through the system.

These safeguards ensure that even in edge cases, the system “degrades predictably” instead of producing nonsense or causing downstream failures.

6. Layered Testing and Validation

Testing AI systems goes beyond simple unit tests. Adopt a multi-level testing strategy:
- Unit tests: Use traditional tests for data transformations and utility functions to catch simple errors.
- Task-level tests: Validate components in isolation. For example, check that a preprocessing step normalizes text correctly, or that a prompt generator always formats queries as expected.
- End-to-end scenarios: Simulate user workflows. Run your AI agent or service on a suite of realistic tasks (including adversarial and boundary inputs) to see how the full pipeline performs.
- Regression and adversarial tests: Keep a library of tricky cases (outliers, malicious inputs) that have caused issues before, and re-run them whenever you change the model or code.
- Shadow and canary deployments: Test
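Practice 5's timeouts, circuit breaker, predefined fallback path, and confidence-based human escalation fit together roughly as sketched here. This is a minimal, single-threaded illustration under stated assumptions: the failure count, reset window, and 0.7 confidence floor are hypothetical values; the primary call is assumed to return an `(answer, confidence)` pair and to raise an exception on error or timeout; and "escalation" is just a label where a real system would enqueue the case for review.

```python
import time

class CircuitBreaker:
    """Minimal circuit breaker (a sketch, not a production library).

    After `max_failures` consecutive failures the circuit opens and calls go
    straight to the fallback for `reset_after` seconds. After that, one trial
    call is let through ("half-open"); success closes the circuit, and another
    failure re-opens it. Timeouts are assumed to be enforced by the primary call
    itself (for example, a client-side request timeout) and to surface as exceptions.
    """

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock          # injectable so tests can control time
        self.failures = 0
        self.opened_at = None

    @property
    def is_open(self):
        return self.opened_at is not None

    def call(self, primary, fallback, *args):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                return fallback(*args)      # open: don't hammer a failing dependency
            self.opened_at = None           # half-open: allow a single trial call
            self.failures = self.max_failures - 1
        try:
            result = primary(*args)
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            return fallback(*args)
        self.failures = 0
        return result


CONFIDENCE_FLOOR = 0.7  # hypothetical escalation threshold

def safe_default(question):
    # Predefined alternative path: a safe canned reply with zero confidence.
    return ("I'm sorry, I cannot answer that right now.", 0.0)

def answer(breaker, primary, question):
    """Return ("AUTO", text) or ("ESCALATED_TO_HUMAN", text)."""
    text, confidence = breaker.call(primary, safe_default, question)
    if confidence < CONFIDENCE_FLOOR:
        return ("ESCALATED_TO_HUMAN", text)  # a real system would enqueue for review
    return ("AUTO", text)


def flaky_llm(question):
    # Stand-in for a model endpoint that is currently timing out.
    raise TimeoutError("model endpoint timed out")

breaker = CircuitBreaker(max_failures=3, reset_after=30.0)
for attempt in range(1, 5):
    route, _ = answer(breaker, flaky_llm, "Where is my order?")
    print(f"attempt {attempt}: {route}, circuit open = {breaker.is_open}")
```

Injecting the clock keeps the breaker testable without real waiting; in production, teams often reach for an established resilience library or a service-mesh policy rather than hand-rolling this logic.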

Top 10 AI & ML Frameworks You Can’t Ignore in 2026

The AI and machine learning ecosystem is evolving quickly. By 2026, models will be more complex, data volumes will be larger, and expectations from AI systems will be higher, not just in terms of accuracy but also reliability, speed of deployment, and long-term maintainability. As a result, the tools used to build these systems have become a critical part of the decision-making process. The right foundation can shorten development cycles, reduce operational risk, and make it easier to scale AI applications as business needs grow.

There is no universal solution that works for every project. Different approaches are needed depending on the type of data, performance requirements, and how the system will be used in production.

How AI Frameworks Work

AI frameworks provide the core infrastructure that makes machine learning development practical. They handle complex operations such as data processing, mathematical computations, model training, hardware acceleration, and deployment workflows. Instead of writing low-level code for GPUs, memory management, and optimization algorithms, developers work with high-level building blocks. This lets teams focus on model design and business logic while the framework manages performance, scalability, and reliability in the background. In short, frameworks turn AI from an experimental activity into a structured engineering process.

Why AI Frameworks Are Essential for Modern Businesses

AI systems must scale, remain stable under heavy workloads, and integrate with cloud platforms and existing software. Frameworks provide standardized tools and practices that make this possible. They help organizations:
- Reduce development time
- Maintain consistent model behavior across teams
- Deploy models reliably into production
- Scale systems as data and users grow
- Lower long-term maintenance costs

This is why mature frameworks are widely adopted across industries such as finance, healthcare, logistics, and SaaS. They minimize technical risk while enabling faster innovation.

Top AI Frameworks

Choosing the right AI framework directly affects how quickly your team can build, how well your models perform, and how easily your system scales in production. It also influences long-term maintainability and how smoothly new developers can contribute to the project. Most teams evaluate frameworks on performance, community support, flexibility across use cases, and the learning curve for their developers.

Today, most AI systems are built on open-source frameworks. They are cost-effective, highly adaptable, and supported by large global communities. This makes it easier to experiment with new techniques, work with different types of data, and integrate AI into existing platforms without being tied to a single vendor.

Below are some of the most widely used open-source AI frameworks shaping real-world AI development in 2026:

1. TensorFlow (Google) – Built for Production-Scale AI

TensorFlow continues to be one of the most widely used AI frameworks in 2026, especially for large, production-grade systems. Developed by Google, it provides a complete environment for building, training, and deploying machine learning models across cloud, mobile, and edge devices.

Its ecosystem is one of its biggest strengths. Tools like TensorFlow Extended (TFX) support full MLOps pipelines, TensorFlow Lite enables on-device inference, and TensorFlow.js brings models to the browser. Combined with strong CPU, GPU, and TPU support, this makes TensorFlow a practical choice for organizations running AI at scale.
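Much of the heavy lifting these frameworks do is automatic differentiation: computing the gradient of a loss with respect to every model parameter so an optimizer can update them. The sketch below is a deliberately tiny, pure-Python reverse-mode autodiff for scalars, in the spirit of what TensorFlow's `tf.GradientTape` and PyTorch's autograd do at vastly larger scale. It is not either framework's API; the `Value` class and the one-parameter example are hypothetical.

```python
class Value:
    """A scalar that remembers how it was computed, so gradients can flow back."""

    def __init__(self, data, parents=()):
        self.data = float(data)
        self.grad = 0.0
        self._parents = parents
        self._backward = lambda: None  # leaf nodes have nothing to propagate

    def __add__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        out = Value(self.data + other.data, (self, other))
        def backward():
            # d(a + b)/da = d(a + b)/db = 1
            self.grad += out.grad
            other.grad += out.grad
        out._backward = backward
        return out

    def __mul__(self, other):
        other = other if isinstance(other, Value) else Value(other)
        out = Value(self.data * other.data, (self, other))
        def backward():
            # d(a * b)/da = b, d(a * b)/db = a
            self.grad += other.data * out.grad
            other.grad += self.data * out.grad
        out._backward = backward
        return out

    def __neg__(self):
        return self * -1.0

    def __sub__(self, other):
        return self + (-other)

    def backward(self):
        """Reverse-mode pass: order the graph topologically, then apply the chain rule."""
        order, seen = [], set()
        def visit(node):
            if node not in seen:
                seen.add(node)
                for parent in node._parents:
                    visit(parent)
                order.append(node)
        visit(self)
        self.grad = 1.0
        for node in reversed(order):
            node._backward()


# Hypothetical data on the line y = 2x; learn the slope w by gradient descent.
data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
w = Value(0.0)
lr = 0.05
for step in range(100):
    w.grad = 0.0                      # clear the previous step's gradient
    loss = Value(0.0)
    for x, y in data:
        err = w * x - y
        loss = loss + err * err       # squared error, built as a graph
    loss.backward()                   # fills in w.grad automatically
    w.data -= lr * w.grad             # plain gradient-descent update
print(f"learned slope: {w.data:.4f}")
```

Production frameworks add tensors, GPU kernels, many more operations, and memory-efficient graph handling, but the record-then-backpropagate pattern is the part that spares developers from deriving gradients by hand.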
Key features
- Keras high-level API for faster development
- Built-in support for distributed training
- TensorBoard for model monitoring and debugging
- Production-ready model serving infrastructure

Common use cases
- Image and video recognition systems
- Natural language processing pipelines
- Time-series forecasting
- Enterprise applications in healthcare, finance, logistics, and SaaS

TensorFlow is often chosen when teams need to move reliably from experimentation to real-world deployment. Its extensive libraries shorten development cycles for complex models, which is why it’s widely used across Fortune 500 companies.

The trade-off is complexity. The learning curve is steeper than that of some newer frameworks, and debugging can take more effort. But for organizations that prioritize stability, scalability, and long-term maintainability, TensorFlow remains a strong foundation in 2026.

2. PyTorch (Meta) – The Framework of Choice for AI Innovation

PyTorch has firmly established itself as the go-to framework for research and rapid experimentation. Developed by Meta AI, it is built around a dynamic computation model and a clean, Python-first interface, which makes writing, testing, and debugging models far more intuitive. This flexibility allows developers to explore new architectures and ideas without fighting the framework.

In recent years, PyTorch has also matured on the production side with tools like TorchScript and TorchServe, making it increasingly viable for real-world deployment.

Key features
- Dynamic and intuitive API
- Native GPU acceleration with CUDA
- Strong automatic differentiation (autograd)
- Rich ecosystem including TorchVision, TorchText, and PyTorch Lightning

Common use cases
- Deep learning research and prototyping
- NLP systems (often combined with Hugging Face Transformers)
- Computer vision applications
- Reinforcement learning projects

By 2026, PyTorch is expected to be just as common in industry R&D teams as it is in academic research. Its ease of use and fast iteration cycle make it especially attractive to startups and AI-driven product teams building new applications. While TensorFlow has traditionally dominated large enterprise deployments, PyTorch’s production tooling has improved significantly, narrowing that gap. For organizations prioritizing innovation speed and developer productivity, PyTorch has become a leading choice.

3. Keras – High-Level Neural Network API

Keras is the go-to choice for teams that want to build deep learning models quickly without dealing with low-level complexity. Fully integrated into TensorFlow, it serves as TensorFlow’s default high-level API. Its modular design makes model creation intuitive, readable, and fast, which is why it remains popular in education, prototyping, and early-stage product development.

Key features
- Clean and concise model-building syntax
- Built-in layers, activations, and loss functions
- Runs natively on TensorFlow and, since Keras 3, also on JAX and PyTorch backends

Common use cases
- Rapid prototyping
- Teaching and training ML teams
- Simple production workloads

Keras helps teams move from idea to working model in days, not weeks. When applications need to scale, those models can transition smoothly into TensorFlow’s production environment.

4. scikit-learn – The Foundation of Traditional Machine Learning

scikit-learn remains essential for classic machine learning tasks. It offers a reliable

How AI Is Transforming Logistics Operations for US Companies?

US logistics teams are under pressure from every direction. Fuel costs swing unpredictably. Customer expectations keep tightening. Labor shortages are no longer temporary. At the same time, supply chains are more complex and less forgiving than they were even a few years ago.

Here’s the thing. Most logistics leaders are not chasing shiny tech. They want fewer delays, tighter control, and decisions they can trust. That’s why AI adoption in logistics is accelerating: not as an experiment, but as a practical way to bring clarity and consistency to day-to-day operations.

What this really means is simple. AI is moving logistics teams from reactive firefighting to proactive control.

Key Logistics Challenges AI Is Solving

Before talking about tools, it’s worth grounding this in the real problems logistics teams face every day.

Route inefficiencies and fuel costs
Static routing struggles with traffic patterns, weather, last-minute delivery changes, and driver availability. Small inefficiencies compound fast across large fleets.

Demand volatility and forecasting errors
Promotions, seasonality, regional demand shifts, and supplier delays make manual forecasting unreliable. Overstocking and stockouts become expensive habits.

Warehouse bottlenecks and labor shortages
High turnover and uneven workloads slow picking, packing, and dispatch. Even well-run warehouses feel fragile during demand spikes.

Shipment delays and lack of visibility
When something goes wrong in transit, teams often find out too late. Customers get vague updates, and service teams absorb the frustration.

AI steps in where spreadsheets and rule-based systems hit their limits.

Core AI Applications in Logistics Operations

AI-powered demand forecasting
Modern forecasting models learn from historical sales, regional patterns, promotions, weather signals, and even external market data. The result is forecasts that adjust continuously, not once a quarter.
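The idea of a forecast that updates with every new observation can be illustrated with simple exponential smoothing, one of the most basic forecasting methods. This is a deliberately minimal stand-in for the richer models described above: it uses only past demand (no promotions, weather, or market signals), and the weekly demand figures and smoothing factor `alpha` are invented for illustration.

```python
def exponential_smoothing(history, alpha=0.3):
    """Simple exponential smoothing: return the next-period forecast after each observation.

    forecast_next = alpha * actual + (1 - alpha) * forecast_previous
    A higher alpha reacts faster to recent demand; a lower alpha smooths out noise.
    Seeding the first forecast with the first observation is an assumption of this sketch.
    """
    if not history:
        raise ValueError("history must not be empty")
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    forecast = history[0]
    forecasts = []
    for actual in history:
        forecast = alpha * actual + (1 - alpha) * forecast
        forecasts.append(forecast)
    return forecasts


# Hypothetical weekly unit demand for one SKU, with a jump in week 5 (e.g. a promotion).
weekly_units = [120, 130, 125, 140, 180, 175, 190]
for week, f in enumerate(exponential_smoothing(weekly_units), start=1):
    print(f"after week {week}: forecast for week {week + 1} = {f:.1f} units")
```

Each new week of actual demand moves the forecast immediately instead of waiting for a quarterly re-plan; production systems layer seasonality, external signals, and machine-learned models on top of this basic idea.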
For logistics teams, this means better inventory positioning, fewer emergency shipments, and calmer planning cycles.

Route optimization and intelligent dispatch
AI-based routing engines recalculate routes in real time. They factor in traffic, delivery windows, fuel efficiency, vehicle capacity, and driver hours. Dispatchers move from manual juggling to exception handling. Drivers get realistic routes instead of optimistic ones.

Predictive maintenance for fleets
Instead of relying on fixed service schedules, AI models analyze sensor data, usage patterns, and maintenance history. They flag likely failures before breakdowns happen. That reduces unplanned downtime, extends vehicle life, and keeps deliveries on schedule.

Warehouse automation and inventory optimization
AI improves slotting strategies, pick-path optimization, and labor planning. It learns which SKUs move fastest and where congestion builds up during peak hours. Warehouses become more predictable, even with fluctuating order volumes.

Real-time shipment tracking and anomaly detection
AI systems monitor shipments across carriers and modes. When delays, temperature excursions, or route deviations occur, teams get early alerts. This shifts the response from apologizing after the fact to fixing issues while shipments are still moving.

Industry Impact Across US Logistics Segments

AI adoption looks different depending on the logistics model, but the impact is consistent.

Third-party logistics providers
3PLs use AI to balance capacity across clients, optimize shared networks, and meet strict SLAs without burning out their teams.

E-commerce fulfillment networks
Fast delivery depends on accurate demand signals and tight warehouse execution. AI helps decide where to store inventory and how to route orders profitably.

Manufacturing distribution operations
AI improves production-aligned logistics, ensuring materials and finished goods move in sync with factory schedules.

Cold chain and specialized logistics
Temperature-sensitive shipments rely on continuous monitoring. AI detects risk patterns early, reducing spoilage and compliance violations.

Across all segments, AI brings consistency where manual processes struggle to scale.

Why Custom AI Solutions Matter in Logistics

Off-the-shelf tools promise quick wins, but logistics environments are rarely standard. Generic systems often fail because they don’t reflect real constraints such as legacy TMS workflows, custom carrier contracts, regional rules, or unique operational priorities.

Custom AI solutions matter because they:
- Integrate directly with existing TMS, WMS, and ERP systems
- Adapt to how your teams actually work, not how software expects them to
- Scale as networks grow, routes expand, and data volumes increase
- Respect data security, compliance, and audit requirements

What this really means is that AI should fit into operations quietly, without forcing teams to relearn their jobs.

Measurable Business Outcomes

When AI is implemented with operational discipline, the results are tangible. Logistics organizations commonly see:
- Shorter delivery times through dynamic routing
- Lower fuel and transportation costs
- Higher on-time delivery rates
- Improved inventory accuracy across locations
- Better customer satisfaction driven by proactive communication

These outcomes matter because they compound. Small gains across routes, warehouses, and fleets add up to meaningful margin improvements.

AI Is Now an Operational Requirement

AI is no longer a future concept for logistics. It’s becoming part of the baseline for running efficient, reliable operations in the US market.

The difference between success and frustration comes down to execution. Strong data foundations, realistic use cases, and solutions built for real-world logistics environments make all the difference. Teams that treat AI as an operational capability, not a tech experiment, are the ones seeing lasting impact.
A Practical AI Partner for Modern US Logistics Teams
If your logistics operation is dealing with routing complexity, forecasting gaps, warehouse delays, or limited shipment visibility, the right AI strategy can change how your teams operate every day.

Ergobite Tech Solutions works closely with US logistics companies to design and implement custom AI systems that fit real operational workflows, integrate with existing platforms, and scale as your network grows.

If you’re looking for the best AI ML development company for logistics in the US, start with a conversation. Share your challenges, explore practical AI use cases, and see what’s possible with a focused discovery call.

How to Choose the Right AI & ML Development Company in the USA?

Choosing an AI and machine learning partner is not a technical decision alone. It is a business decision that directly affects costs, timelines, product quality, and long-term scalability. The wrong choice often leads to stalled pilots, models that never reach production, poor integration with existing systems, and budgets burned without measurable outcomes.

Here’s the thing. Most AI failures don’t happen because the technology is bad. They happen because the vendor was wrong for the business.

This guide is written to help you avoid that. By the end, you’ll know how to evaluate AI and ML development companies in the USA with clarity, ask the right questions, and choose a partner who can actually deliver production-ready results.

Understand Your Business Needs First
Before comparing vendors, you need internal clarity. AI is not a shortcut. It is an amplifier of whatever systems, data, and processes you already have.

Start with real business problems
Strong AI initiatives begin with clear outcomes: reducing operational delays, improving forecasting accuracy, automating manual reviews, or enhancing customer experiences. If a vendor jumps straight into models without understanding the problem, that’s a red flag.

Experimentation vs production AI
Many companies can build demos. Far fewer can deploy AI that runs reliably in live environments. Production-grade AI requires monitoring, retraining, performance benchmarks, and failure handling. Be clear about whether your goal is experimentation or real deployment.

Assess data readiness
AI depends on data quality, structure, and availability. An experienced partner will evaluate your data pipelines, gaps, and governance before proposing solutions. If this step is skipped, problems show up later, when fixes are expensive.
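A data readiness review often starts with a quick profile of the data before any modeling. The Python sketch below is one hedged illustration, not a standard audit procedure: it assumes records arrive as a list of dicts, treats `None` and empty strings as missing, and uses a hypothetical 10% missing-value tolerance. The function name and the sample order records are invented for illustration. Real assessments also cover pipelines, lineage, and governance, which a script like this cannot capture.

```python
from collections import Counter


def profile_records(records, required_fields, max_missing=0.1):
    """Summarize simple data-readiness signals for a list of dict records.

    Reports the share of missing values per field, the number of exact
    duplicate rows (compared on required_fields), and which fields exceed
    an assumed missing-value tolerance.
    """
    total = len(records)
    missing_rate = {}
    for field in required_fields:
        empty = sum(1 for r in records if r.get(field) in (None, ""))
        missing_rate[field] = empty / total if total else 0.0

    # Count rows that repeat an earlier row on every required field.
    keys = Counter(tuple(r.get(f) for f in required_fields) for r in records)
    duplicate_rows = sum(count - 1 for count in keys.values() if count > 1)

    flagged = [f for f, rate in missing_rate.items() if rate > max_missing]
    return {
        "rows": total,
        "missing_rate": missing_rate,
        "duplicate_rows": duplicate_rows,
        "fields_over_tolerance": flagged,
    }


# Hypothetical order records with a blank region, a duplicate row, and a missing amount.
orders = [
    {"order_id": "A1", "region": "TX", "amount": 120.0},
    {"order_id": "A2", "region": "", "amount": 80.0},
    {"order_id": "A2", "region": "", "amount": 80.0},
    {"order_id": "A3", "region": "CA", "amount": None},
]
print(profile_records(orders, ["order_id", "region", "amount"]))
```

Here half of the rows lack a region and a quarter lack an amount, so both fields exceed the assumed tolerance, and one row is an exact duplicate. Findings like these are cheap to fix before modeling and expensive to discover after deployment.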
Key Factors to Evaluate an AI & ML Development Company in the USA

Proven experience with real deployments
Look beyond case studies that focus only on ideas. Ask about live systems, measurable outcomes, and post-deployment performance. Experience in taking models from development to production matters more than theoretical expertise.

Industry-specific understanding
AI in healthcare, fintech, logistics, or retail comes with very different constraints. Industry context affects data sensitivity, compliance, and decision logic. A company that understands your domain will design smarter solutions faster.

Technical depth beyond models
Strong AI partners combine machine learning with data engineering, cloud infrastructure, APIs, and MLOps. Models don’t exist in isolation. They need pipelines, integrations, and monitoring to stay useful over time.

Custom development over templates
Off-the-shelf tools can help with simple use cases, but serious business problems usually require custom solutions. Evaluate whether the company builds AI around your workflows or tries to force your business into prebuilt tools.

Security, compliance, and data handling
In the US market, data privacy and security are non-negotiable. Ask about encryption, access controls, compliance standards, and data ownership. A credible partner will be transparent and precise here.

Communication and project management
AI projects evolve. Clear documentation, regular updates, and shared accountability matter as much as technical skills. Poor communication often causes more delays than technical challenges.

Ability to scale long-term
Your AI system should grow with your business. Ask how models are maintained, retrained, and scaled as data volume and usage increase. Long-term thinking separates vendors from true partners.

Why Location and US Market Understanding Matter
Working with a company that understands the US business environment brings practical advantages.
They are familiar with compliance expectations, enterprise procurement processes, and customer experience standards common in the US market. Time-zone alignment improves collaboration, speeds up decision-making, and strengthens accountability during critical phases.

Many companies now choose hybrid delivery models. What matters most is not geography alone, but whether the partner can operate smoothly within US business realities.

Questions You Should Ask Before Hiring an AI & ML Partner
Use these questions to separate marketing talk from real capability:
Can you share examples of AI systems currently running in production?
How do you approach data assessment before building models?
What happens after deployment if model performance drops?
Who owns the data and trained models?
How do you handle security and compliance requirements?
How do you measure success for AI projects?
What does long-term support look like after launch?

Clear, confident answers here signal maturity.

Common Mistakes to Avoid When Selecting an AI & ML Company

Choosing based on cost alone
Low upfront pricing often hides future costs. Fixing poorly built AI systems is far more expensive than building them right the first time.

Falling for polished demos
Demos are easy. Production systems are hard. Always ask how the demo translates into a real environment.

Ignoring post-deployment support
AI is not set-and-forget. Models need monitoring, updates, and retraining. Lack of support leads to silent failure.

Overlooking governance and ownership
Unclear ownership of models and data can create legal and operational risks later. Get this clarified early.

Choosing an AI Partner Is a Long-Term Business Decision
The right AI and ML development company does more than write code. They help you define problems, assess feasibility, design systems that fit your business, and stay accountable for results over time.

What this really means is that success comes from alignment.
Business goals, data realities, technical execution, and long-term support must work together. When evaluating partners, prioritize clarity, experience, and reliability over buzzwords and flashy promises.

Start With a Clear Conversation, Not a Sales Pitch
If you’re planning to build AI or machine learning solutions that actually deliver business outcomes, it helps to work with a partner who understands both technology and execution.

Ergobite Tech Solutions works with US businesses to design, build, and deploy custom AI and ML solutions aligned with real operational needs. If you’re looking for a trusted AI ML development company in the US, start with a conversation. Share your use case, explore your options, and see what a focused discovery process can uncover before you commit to development.

How US Businesses Are Using AI & Machine Learning in Real Operations

AI and machine learning are no longer side experiments inside innovation labs. Across the US, they are embedded directly into daily operations, quietly improving efficiency, accuracy, and decision-making.

What’s changed is not just the technology, but how businesses apply it. The focus has moved from flashy demos to systems that save time, reduce costs, and scale reliably.

Let’s break down how AI and ML are actually being used inside real US businesses today, industry by industry.

AI & ML in Real Operations Across Key US Industries

1. Healthcare
The problem: Healthcare organizations struggle with rising operational costs, delayed diagnoses, staffing shortages, and fragmented data across systems.
How AI is applied: AI models are used to analyze medical images, flag high-risk patients, automate appointment scheduling, and predict patient readmission risks. Machine learning also helps streamline claims processing and detect billing anomalies.
Operational impact: Hospitals reduce diagnostic turnaround time, improve patient outcomes, and lower administrative overhead. Clinicians spend less time on paperwork and more time on patient care.

If you’re exploring AI for patient risk analysis, diagnostics, or hospital operations, our AI & ML development services for healthcare in the US are built for regulated, real-world environments.

2. Finance & Banking
The problem: Traditional banking operations face fraud risks, compliance pressure, and slow manual processes.
How AI is applied: Machine learning models monitor transactions in real time to detect fraud, automate credit scoring, and support loan approval workflows. AI-powered chat systems handle routine customer queries securely.
Operational impact: Banks reduce fraud losses, improve compliance accuracy, and speed up decision-making without increasing operational headcount.
For banks modernizing fraud detection, credit risk, or compliance workflows, our AI & ML solutions for small banks focus on accuracy, security, and audit readiness.

3. Fintech
The problem: Fintech companies operate at high transaction volumes and require real-time decisioning with minimal error margins.
How AI is applied: AI drives automated underwriting, personalized financial recommendations, payment fraud detection, and dynamic risk assessment.
Operational impact: Faster approvals, lower default rates, and highly scalable platforms that handle growth without breaking core systems.

If your fintech platform needs real-time decisioning and scalable risk models, explore our AI & ML development services for fintech startups in the US.

4. Retail & E-commerce
The problem: Retailers struggle with inventory mismatches, unpredictable demand, and inconsistent customer experiences across channels.
How AI is applied: Machine learning predicts demand, optimizes inventory levels, personalizes product recommendations, and adjusts pricing dynamically based on real-time data.
Operational impact: Reduced stockouts, higher conversion rates, and better margins without relying on manual forecasting.

5. Manufacturing
The problem: Downtime, quality issues, and inefficient production planning impact margins.
How AI is applied: AI models analyze sensor data for predictive maintenance, detect defects during quality inspections, and optimize production schedules.
Operational impact: Fewer machine failures, lower waste, and smoother production cycles with measurable cost savings.

6. Supply Chain & Logistics
The problem: Delays, rising fuel costs, and poor demand visibility disrupt supply chains.
How AI is applied: AI optimizes routing, forecasts demand, predicts delays, and improves warehouse automation through intelligent sorting and picking systems.
Operational impact: Lower logistics costs, faster deliveries, and better coordination across suppliers, warehouses, and distribution networks.

If supply chain visibility, route optimization, or forecasting is a priority, our AI & ML solutions for logistics and supply chain in the US focus on cost control and reliability.

7. Real Estate
The problem: Property valuation, lead qualification, and market analysis are time-consuming and data-heavy.
How AI is applied: Machine learning models estimate property values, analyze market trends, score leads, and automate document processing.
Operational impact: Faster deal cycles, better pricing accuracy, and more efficient sales operations.

8. Insurance
The problem: Manual underwriting and claims processing slow down service for customers and increase fraud exposure.
How AI is applied: AI automates risk assessment, speeds up claims evaluation using image and data analysis, and flags suspicious claims.
Operational impact: Shorter claim settlement times, reduced fraud, and improved customer trust.

9. Marketing & Advertising
The problem: Marketing teams face fragmented data, rising acquisition costs, and unclear ROI.
How AI is applied: Machine learning analyzes user behavior, predicts conversion likelihood, automates campaign optimization, and personalizes messaging.
Operational impact: Higher ROI, better targeting, and smarter budget allocation driven by real performance data.

10. SaaS & Technology Companies
The problem: Scaling products while maintaining performance, security, and user satisfaction is complex.
How AI is applied: AI improves product analytics, automates customer support, detects anomalies, and enhances user onboarding through behavior-based insights.
Operational impact: Improved retention, reduced support load, and more stable platforms as user bases grow.
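The retail and supply chain entries above both describe demand forecasting that feeds inventory decisions. As a hedged illustration, the Python sketch below swaps in a classical statistical baseline rather than a machine learning model: simple exponential smoothing for the next day’s demand and a textbook reorder-point formula with safety stock. The smoothing factor, the roughly 95% service level (z = 1.65), and using the standard deviation of raw demand instead of forecast errors are simplifying assumptions, and the sales figures are hypothetical. A baseline like this is also a useful yardstick for judging whether a more complex model adds value.

```python
from math import sqrt
from statistics import stdev


def smoothed_forecast(history, alpha=0.3):
    """Simple exponential smoothing: each period blends the newest demand
    with the previous level. Returns the next-period forecast."""
    level = history[0]
    for demand in history[1:]:
        level = alpha * demand + (1 - alpha) * level
    return level


def reorder_point(history, lead_time_days, alpha=0.3, z=1.65):
    """Stock level at which to reorder: expected lead-time demand plus
    safety stock. Assumes daily demand is roughly normal and independent,
    so z=1.65 targets about a 95% service level. Needs at least two points."""
    forecast = smoothed_forecast(history, alpha)
    safety_stock = z * stdev(history) * sqrt(lead_time_days)
    return forecast * lead_time_days + safety_stock


daily_units = [100, 120, 110, 130]  # hypothetical daily sales for one SKU
print(smoothed_forecast(daily_units, alpha=0.5))  # 120.0
print(round(reorder_point(daily_units, lead_time_days=4, alpha=0.5), 1))
```

With a four-day lead time, the reorder point is the 480 units expected during resupply plus roughly 43 units of safety stock. Raising `z` buys a higher service level at the cost of holding more inventory.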
Cross-Functional Use of AI Inside Organizations
Beyond industry-specific use cases, AI supports core business functions across departments:
Operations: Workflow automation and process optimization
Customer experience: Faster support and personalized interactions
Forecasting & analytics: Better planning using historical and real-time data
Cost control: Identifying inefficiencies and reducing manual effort
Risk & compliance: Continuous monitoring and early risk detection

What this really means is that AI works best when it’s embedded into existing workflows rather than treated as a standalone tool.

Why US Businesses Prefer Custom AI Solutions
Off-the-shelf AI tools can help at a surface level, but they often fall short when applied to complex business operations. Most US businesses operate with unique processes, data structures, and compliance requirements.

Custom AI solutions allow companies to:
Align AI models with real operational workflows
Use proprietary business data securely
Integrate seamlessly with existing systems
Scale without vendor lock-in

This is why many organizations opt for custom AI and ML development over generic platforms.

Conclusion
AI and machine learning are no longer experimental technologies. They are operational tools driving measurable results across US industries. Businesses that succeed with AI focus on practical use cases, clean data, and execution that fits their real-world processes.

The companies seeing the most value are not chasing trends. They are developing AI systems that operate quietly in the background, enhancing decision-making, reducing costs, and scaling operations sustainably.

Ready to

Why Small Banks Need AI and ML to Stay Competitive

Small banks operate in a tight space: limited staff, rising digital workloads, and customers who expect fast and accurate service. AI and ML help these institutions work smarter by automating slow tasks, strengthening risk decisions, cutting operational pressure, and improving customer experience.

This guide explains the real reasons small banks benefit from AI and how it fits into their daily work.

The Real Problem Small Banks Face
Small banks don’t lose customers because of poor service. They lose customers because their systems and processes are slow compared to fintechs and large banks.

Here’s what slows them down:
Loan officers spend hours checking documents
Manual KYC increases onboarding time
Fraud checks happen after the incident
Departments depend on outdated tools
Staff are stretched across multiple responsibilities
Regulators demand faster, cleaner reporting

These challenges affect turnaround time, customer trust, and internal efficiency. AI steps in to relieve this pressure.

How AI Becomes Useful Instead of “Just Technology”
The best way to understand AI’s value is through real banking moments.

Faster loan approvals
A small bank usually takes hours or days to review loan applications. Data lives in different systems, and officers must manually verify everything.

AI pulls that information together instantly. It reads documents, analyzes applicant behavior, and highlights key risks.

The result:
quicker approvals
consistent decisions
fewer missed opportunities
better lending quality

Customers notice the speed. Teams feel the relief.

Early fraud detection
Fraud is rarely obvious when you look at one transaction. It becomes clear only when you look at patterns, which humans can’t track at scale.

AI monitors transactions continuously and flags unusual behavior early, not after a loss. This reduces damage, protects customers, and builds long-term trust.
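The pattern-based fraud monitoring described above can be illustrated with a deliberately simple rule set. The Python sketch below is an assumption-laden stand-in, not a bank-grade fraud model: it compares a new transaction with the same customer’s past transactions using a z-score on the amount plus a check for never-before-seen merchant categories, and it marks customers with too little history for closer review. The `Txn` record, thresholds, and flag names are hypothetical. Production systems typically combine many more signals, such as device, location, and timing, in trained models.

```python
from dataclasses import dataclass
from statistics import mean, stdev


@dataclass
class Txn:
    amount: float
    merchant_category: str


def risk_flags(history, txn, z_limit=3.0, min_history=5):
    """Compare one new transaction against the customer's own history.

    Hypothetical rules: flag amounts far above the customer's usual
    spending, merchant categories never seen before, and customers with
    too little history to judge.
    """
    flags = []
    amounts = [t.amount for t in history]
    if len(amounts) >= min_history:
        mu, sigma = mean(amounts), stdev(amounts)
        # One-sided test: only unusually large amounts are flagged.
        # The z-score is skipped when every past amount is identical (sigma == 0).
        if sigma > 0 and (txn.amount - mu) / sigma > z_limit:
            flags.append("amount_spike")
    else:
        flags.append("thin_history")
    if txn.merchant_category not in {t.merchant_category for t in history}:
        flags.append("new_merchant_category")
    return flags


past = [Txn(40, "grocery"), Txn(55, "fuel"), Txn(38, "grocery"),
        Txn(60, "fuel"), Txn(47, "grocery"), Txn(52, "grocery")]
print(risk_flags(past, Txn(900, "electronics")))  # ['amount_spike', 'new_merchant_category']
print(risk_flags(past, Txn(50, "grocery")))       # []
```

Flags like these would feed a review queue rather than block payments outright. The thresholds are placeholders that a bank would tune against its own historical fraud cases.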
Lower workload for small teams
A bank with 20–50 employees cannot spend most of its time on repetitive tasks. AI handles tasks like:
document checks
compliance validations
routine customer queries
data organization

This frees employees for complex work that requires experience and judgment.

Clearer, stronger customer experience
When a customer gets fast support, quick loan decisions, and transparent communication, they stay loyal. AI helps small banks deliver exactly that without increasing staff.

A Simple Table That Shows the Difference
Banking Workflow | Before AI | After AI
Customer onboarding | Manual verification, delays | Automated checks, faster onboarding
Loan processing | Hours of review per file | Data gathered instantly, quick scoring
Fraud monitoring | Reactive approach | Real-time detection of unusual patterns
Compliance reporting | Heavy paperwork | Auto-organized reports and alerts
Customer support | Long wait times | Intelligent assistants for routine queries

This is where small banks feel the impact from day one.

Why AI Matters More for Smaller Institutions
Big banks use AI because they have the money. Small banks need AI because they don’t.

AI gives them:
speed without hiring dozens of new employees
safer decisions without expanding risk teams
a modern customer experience without building huge tech stacks

It levels the playing field. It reduces operational strain. It helps every department work faster and more cleanly.

Most importantly, AI helps small banks stay relevant in a market where digital expectations keep rising.

A Practical Way Small Banks Can Start
Small banks don’t need to jump into a full-scale AI transformation right away. The smartest approach is to begin with a single area where the impact is both visible and immediate. For most institutions, this usually means automating loan reviews, strengthening fraud detection, or simplifying compliance checks.
Once teams experience faster decisions, fewer manual steps, and a lighter workload, it becomes much easier to introduce AI into other parts of the operation. The real success comes from integrating AI into the systems you already rely on, not replacing everything at once. A smooth, steady adoption brings more value than a disruptive overhaul and helps teams adjust naturally while still improving performance across the bank.

Conclusion
AI and ML aren’t overwhelming technologies. They’re tools that solve long-standing problems for small banks: slow processes, heavy workloads, inconsistent decisions, and growing fraud risk.

With AI, small banks can deliver faster services, make stronger decisions, and build a modern experience that customers appreciate. The banks that adopt AI now will operate with confidence and clarity. Those that wait may struggle to keep pace with the digital shift happening around them.

Partner With a Team That Helps Small Banks Modernize With Confidence
If you’re exploring how AI can simplify your daily operations and improve the way your institution serves customers, Ergobite can support you through that journey in a practical, grounded way.

As an AI ML software development company for small banks, Ergobite works closely with financial teams to understand their processes, pain points, and regulatory needs before designing solutions that fit naturally into existing systems. The goal isn’t to overwhelm your teams with technology; it’s to make loan reviews faster, fraud checks smarter, compliance easier, and customer experiences smoother.

If you want a partner that understands the realities of small-bank operations and can guide you toward meaningful digital transformation, Ergobite brings the technical depth and banking insight needed to make that shift feel simple and achievable.

FAQs
1. Why should small banks consider using AI and ML?
Small banks face heavy workloads, rising fraud, and higher customer expectations.
AI and ML help automate slow tasks, improve risk decisions, and create faster, more reliable banking experiences without increasing staff size.

Key Trends & Statistics 2025
The global AI market was estimated at around USD 390 billion in 2025 and is projected to reach approximately USD 3.5 trillion by 2033, a compound annual growth rate (CAGR) of about 31.5%. (Grand View Research)
India’s AI market was valued at about USD 9.51 billion in 2024 and is forecast to grow to around USD 130.63 billion by 2032, a projected CAGR of nearly 39%. (Fortune Business Insights)
Demand for AI and ML roles is surging: in India, for example, AI/ML job postings rose 42% year-on-year in June 2025. (economictimes.indiatimes.com)

2. Is AI only useful for large banks with big budgets?
No. AI is especially useful for